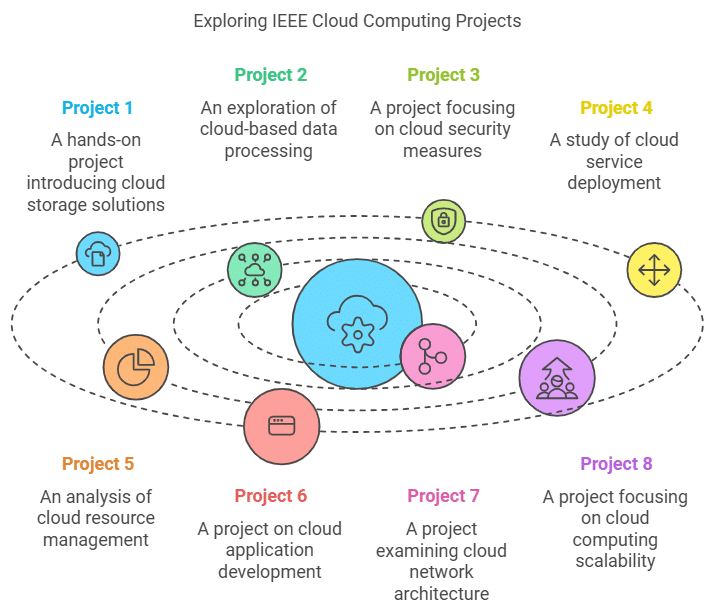

November 12, 2024

Cloud computing has revolutionized the way businesses store, manage, and process data. With the increasing demand for scalable and secure computing solutions, IEEE Cloud Computing Projects have become an essential field of study for engineering students. From cloud service deployment to cloud security projects, this blog explores the top IEEE Cloud Computing Project Ideas that offer hands-on experience and innovation in the world of cloud technology.

In this post, we’ll dive into the best cloud computing project ideas for students, highlighting IEEE Cloud Computing Projects related to cloud infrastructure, application development, and cloud security. Whether you’re interested in deploying cloud services, securing cloud environments, or developing cloud-based applications, these projects will give you the necessary knowledge and skills.

1. Cloud Service Deployment IEEE Cloud Computing Projects

Cloud service deployment is a fundamental part of cloud computing, allowing businesses to run infrastructure, applications, and services without owning or maintaining physical hardware. IEEE Cloud Service Deployment Projects focus on creating frameworks for deploying services to cloud environments like AWS, Google Cloud, or Microsoft Azure.

One great IEEE Cloud Computing Project idea is to develop a cloud management platform that automates the deployment of services, applications, and databases. This project can help students understand the main cloud service models (SaaS, PaaS, and IaaS) while also exploring service orchestration tools and cloud management systems.
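One core job of such a platform is deciding the order in which components are deployed. The sketch below is a minimal illustration, assuming a hypothetical four-component stack; the component names and the `deployment_order` helper are made up for this example and are not part of any cloud provider's API. It uses Python's standard `graphlib` module to make sure every dependency is deployed before the component that needs it.

```python
from graphlib import TopologicalSorter

# Hypothetical stack: each component maps to the components it depends on.
DEPENDENCIES = {
    "database": set(),
    "cache": set(),
    "api": {"database", "cache"},
    "frontend": {"api"},
}


def deployment_order(dependencies):
    """Return the components in an order where dependencies come first."""
    # static_order() raises graphlib.CycleError if the dependencies are circular.
    return list(TopologicalSorter(dependencies).static_order())


order = deployment_order(DEPENDENCIES)
print(order)
```

A real platform would replace the final `print` with calls to the provider's deployment tooling, but the ordering step stays the same.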

At ElysiumPro, we guide students through cloud service deployment projects, helping them create scalable solutions for deploying and managing cloud-based applications and services, ensuring they gain practical experience with real-world cloud platforms.

2. Cloud Security Projects

Cloud security has become a critical issue as businesses increasingly move sensitive data to the cloud. IEEE Cloud Security Projects focus on building solutions that protect data, applications, and services hosted in the cloud. These IEEE Cloud Computing Projects explore various aspects of cloud security, including encryption, access control, identity management, and secure cloud communication.

A great project idea for cloud security would be to develop a secure cloud storage system with end-to-end encryption. This system would ensure that only authorized users can access files, with data encrypted on the client before upload so that it stays protected both in transit and while stored in the cloud.

At ElysiumPro, we support students in implementing cloud security projects, helping them develop solutions that meet industry security standards, including multi-factor authentication and secure API design.
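To make the multi-factor authentication part concrete, here is a minimal sketch of time-based one-time passwords (TOTP, RFC 6238), the scheme behind most authenticator apps. It uses only the Python standard library. The function names and the `verify` helper are illustrative choices for this post, and a production system would also need secure secret storage, rate limiting, and tolerance for clock drift.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA-1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation: low 4 bits pick the offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, now: Optional[float] = None, step: int = 30,
         digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the time step count."""
    if now is None:
        now = time.time()
    return hotp(secret, int(now // step), digits)


def verify(secret: bytes, submitted: str, now: Optional[float] = None) -> bool:
    """Compare in constant time to avoid leaking how many digits matched."""
    return hmac.compare_digest(totp(secret, now), submitted)
```

The server and the user's authenticator app share `secret`; at login, the server calls `verify` on the code the user types in as the second factor.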

3. Cloud Application Development IEEE Cloud Computing Projects

Cloud Application Development has become an essential skill as businesses seek to build scalable, flexible, and high-performance applications in the cloud. IEEE Cloud Application Development Projects involve developing applications that run on cloud platforms, leveraging cloud services for storage, computing, and processing.

One IEEE Cloud Computing Project idea is to create a cloud-based inventory management system that can handle real-time updates and large-scale data. This system would use a managed cloud database such as Amazon RDS or Google Cloud SQL and run its business logic on a serverless service such as AWS Lambda.
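As a rough sketch of what the serverless piece might look like, the function below follows the `handler(event, context)` shape that AWS Lambda uses for Python functions. Everything else is an assumption made for illustration: the event fields `item` and `delta`, the status codes, and the in-memory `STOCK` dictionary, which stands in for a real cloud database.

```python
import json

# In-memory stand-in for a cloud database table (hypothetical data).
STOCK = {"widget": 10, "gadget": 0}


def handler(event, context=None):
    """Apply a stock change described by `event` and return an HTTP-style response."""
    item = event.get("item")
    delta = event.get("delta", 0)
    if item not in STOCK:
        return {"statusCode": 404, "body": json.dumps({"error": "unknown item"})}
    if STOCK[item] + delta < 0:
        # Refuse updates that would drive the quantity below zero.
        return {"statusCode": 409, "body": json.dumps({"error": "insufficient stock"})}
    STOCK[item] += delta
    return {"statusCode": 200,
            "body": json.dumps({"item": item, "quantity": STOCK[item]})}
```

Calling `handler({"item": "widget", "delta": -3})` locally is a quick way to test the logic before wiring it to a real database and an API gateway.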

At ElysiumPro, we help students build robust cloud-based applications, offering guidance on development platforms such as AWS, Microsoft Azure, and Google Cloud, enabling students to develop scalable applications for real-world usage.

4. Cloud Infrastructure IEEE Cloud Computing Projects

Cloud infrastructure is the backbone of cloud computing, involving virtualized resources and storage systems that support cloud services and applications. IEEE Projects on Cloud Infrastructure explore creating solutions that optimize and manage the resources used by cloud services.

A potential project in this category would be to build an auto-scaling system that automatically adjusts cloud resource allocation based on demand. This could involve using Amazon EC2, Google Compute Engine, or Azure Virtual Machines to dynamically scale cloud resources to meet application needs.
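The heart of an auto-scaler is a small calculation. The sketch below uses a proportional rule, the same idea as the formula documented for the Kubernetes Horizontal Pod Autoscaler: scale the instance count by the ratio of observed to target utilization, then clamp it to configured limits. The function name, parameters, and default limits are assumptions for this example.

```python
import math


def desired_instances(current, observed_util, target_util,
                      min_instances=1, max_instances=10):
    """Return the instance count that would bring utilization near the target."""
    if current < 1 or target_util <= 0:
        raise ValueError("current must be >= 1 and target_util must be > 0")
    # Proportional rule: more load per instance than targeted means more instances.
    raw = math.ceil(current * observed_util / target_util)
    # Clamp so the scaler never drops below or exceeds the configured limits.
    return max(min_instances, min(max_instances, raw))


print(desired_instances(4, observed_util=90, target_util=60))  # scale out
print(desired_instances(4, observed_util=30, target_util=60))  # scale in
```

A student project would feed this function with real metrics and add a cooldown period so the system does not flip back and forth on short spikes.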

ElysiumPro helps students understand cloud infrastructure management, ensuring they develop IEEE Cloud Computing Projects that focus on resource optimization and on efficient cloud architectures built for scalability and high availability.

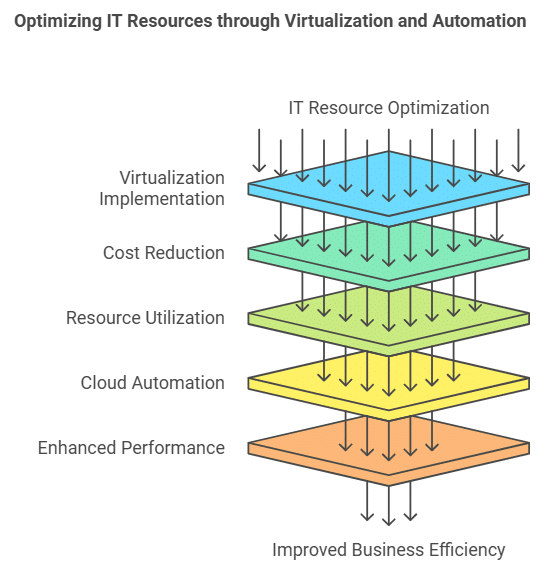

5. Virtualization and Cloud Automation IEEE Cloud Computing Projects

Virtualization and automation are key concepts in cloud computing, allowing businesses to optimize resource utilization and automate repetitive tasks. Students can develop IEEE Cloud Computing Projects that explore the automation of cloud tasks, including the deployment and management of virtual machines and containers.

One idea is to create a cloud automation platform that uses tools like Terraform or Ansible to automate the provisioning of cloud resources. This platform can streamline the deployment process, reducing the need for manual intervention and ensuring resources are allocated efficiently.
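Tools of this kind typically work declaratively: you describe the resources you want, and the tool works out what has to change. The sketch below illustrates that idea in plain Python. It is not Terraform's or Ansible's actual algorithm or API; the `plan` function and the resource names are hypothetical.

```python
def plan(desired, actual):
    """Compare desired and actual resources and list the actions to reconcile them."""
    actions = []
    for name in sorted(desired.keys() | actual.keys()):
        if name not in actual:
            actions.append(("create", name))   # wanted but missing
        elif name not in desired:
            actions.append(("delete", name))   # exists but no longer wanted
        elif desired[name] != actual[name]:
            actions.append(("update", name))   # exists with a different config
    return actions


# Hypothetical example: what we want versus what is currently running.
desired = {"vm-web": {"size": "small"}, "vm-db": {"size": "large"}}
actual = {"vm-web": {"size": "medium"}, "vm-old": {"size": "small"}}

for action, name in plan(desired, actual):
    print(action, name)
```

Running the plan again after applying it should produce no actions, which is a useful property to test for in an automation project.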

At ElysiumPro, we provide hands-on assistance for cloud automation IEEE Cloud Computing Projects, helping students automate cloud services and infrastructure deployment using industry-standard tools like Docker, Kubernetes, and Terraform.

6. Cloud Data Storage and Backup Solutions

Data storage and backup are critical components of cloud services, ensuring that large volumes of data are securely stored and readily accessible. IEEE Cloud Data Storage Projects focus on creating systems for managing cloud-based data storage, including backup solutions, disaster recovery plans, and data redundancy systems.

Students could work on a cloud backup solution that automatically backs up important files and ensures data is recoverable in case of an outage or disaster. This system would incorporate features like cloud snapshots, data encryption, and file versioning.
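File versioning is a good place to start prototyping. The following sketch keeps every distinct version of a file and skips uploads when nothing has changed, using a SHA-256 hash of the content as the identifier. The `VersionedBackup` class is a hypothetical in-memory model; a real system would write the blobs to cloud object storage and encrypt them first.

```python
import hashlib


class VersionedBackup:
    """In-memory model of a backup store that keeps every file version."""

    def __init__(self):
        self.blobs = {}     # content hash -> file bytes
        self.versions = {}  # file path -> list of content hashes, oldest first

    def backup(self, path, data: bytes) -> bool:
        """Store `data` as a new version; return False if it is unchanged."""
        digest = hashlib.sha256(data).hexdigest()
        history = self.versions.setdefault(path, [])
        if history and history[-1] == digest:
            return False  # identical to the latest version, nothing to upload
        self.blobs.setdefault(digest, data)
        history.append(digest)
        return True

    def restore(self, path, version=-1) -> bytes:
        """Return a stored version of `path` (the latest by default)."""
        return self.blobs[self.versions[path][version]]
```

Because blobs are keyed by their hash, two files with identical content are stored only once, which is a simple form of deduplication.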

At ElysiumPro, we assist students with IEEE Cloud Computing Projects focused on cloud data storage, guiding them through creating secure and reliable backup systems that meet business continuity needs.

7. Cloud Monitoring and Performance Optimization IEEE Cloud Computing Projects

Cloud service performance is essential to ensure that applications and services run smoothly without delays or downtime. Cloud Monitoring Projects focus on developing systems that track the performance of cloud services and optimize their use.

An IEEE Cloud Computing Project idea could be to create a cloud monitoring tool that tracks the usage of cloud resources, such as CPU, memory, and bandwidth, and provides alerts when resource limits are about to be exceeded. The tool can also include automated performance tuning features to keep cloud performance close to optimal.
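The alerting logic can be prototyped before any cloud account is involved. This sketch averages the most recent samples of a metric and reports a warning before the limit is actually reached. The `ThresholdMonitor` class, the three-sample window, and the 80% warning ratio are assumptions chosen for illustration.

```python
from collections import deque


class ThresholdMonitor:
    """Track recent samples of one metric and classify the rolling average."""

    def __init__(self, limit, window=3, warn_ratio=0.8):
        self.limit = limit
        self.warn_at = limit * warn_ratio
        self.samples = deque(maxlen=window)  # old samples drop off automatically

    def record(self, value):
        """Add a sample and return 'ok', 'warning', or 'critical'."""
        self.samples.append(value)
        average = sum(self.samples) / len(self.samples)
        if average >= self.limit:
            return "critical"
        if average >= self.warn_at:
            return "warning"
        return "ok"
```

Averaging over a window keeps a single short spike from triggering an alert, at the cost of reacting slightly later to a sustained increase.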

At ElysiumPro, we support students in developing cloud monitoring systems, teaching them how to track cloud resource performance and implement optimization strategies to improve service reliability.

8. Hybrid Cloud Solutions

Hybrid cloud solutions combine on-premises infrastructure with cloud services, providing a flexible computing environment. Students interested in this field can work on IEEE Cloud Computing Projects that create hybrid cloud systems that balance workloads between private and public clouds.

An IEEE Cloud Computing Project idea could involve building a hybrid cloud management system that helps companies allocate resources between their private data centers and public cloud platforms. This would involve integrating on-premises applications with cloud-based services for better efficiency and scalability.
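A simple placement policy makes the allocation problem tangible. In the sketch below, sensitive workloads must stay in the private data center, and everything else uses leftover private capacity before "bursting" to the public cloud. The policy, the `place_workloads` function, and the workload fields are hypothetical and chosen only to illustrate the idea.

```python
def place_workloads(workloads, private_cpus):
    """Assign each workload to 'private' or 'public' with a greedy policy."""
    placement = {}
    free = private_cpus
    # Sensitive workloads go first so they claim private capacity before others.
    for w in sorted(workloads, key=lambda w: not w["sensitive"]):
        if w["cpus"] <= free:
            placement[w["name"]] = "private"
            free -= w["cpus"]
        elif w["sensitive"]:
            raise ValueError(f"no private capacity for {w['name']}")
        else:
            placement[w["name"]] = "public"  # burst to the public cloud
    return placement


workloads = [
    {"name": "web", "cpus": 4, "sensitive": False},
    {"name": "payroll", "cpus": 6, "sensitive": True},
    {"name": "batch", "cpus": 8, "sensitive": False},
]
print(place_workloads(workloads, private_cpus=12))
```

A fuller project could extend the policy with cost per CPU hour, data transfer charges, or latency requirements.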

ElysiumPro helps students develop hybrid cloud solutions, ensuring they gain the skills necessary to build flexible, cost-efficient systems that balance the benefits of private and public cloud computing.

9. Cloud-Based IoT IEEE Cloud Computing Projects

The Internet of Things (IoT) and cloud computing are highly complementary, with cloud platforms providing the scalability and processing power needed to manage large numbers of connected devices. IEEE Cloud-Based IoT Projects explore integrating cloud technology with IoT systems to enable data collection, processing, and analysis.

One exciting project idea is to create a cloud-based IoT home automation system that allows users to control home devices, such as lights, security systems, and thermostats, from anywhere in the world. This project would use cloud services for data storage and remote access, integrating IoT devices with cloud-based control.
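Remote control usually works by having the cloud store the state the user wants and letting each device catch up to it. The sketch below loosely follows that desired-versus-reported pattern, which services such as AWS IoT Device Shadow and Azure IoT Hub device twins are built around. The `Device` class and the `sync` function are simplified stand-ins, not those services' APIs.

```python
class Device:
    """Simulated IoT device that reports its state and accepts changes."""

    def __init__(self, name, state):
        self.name = name
        self.state = dict(state)

    def apply(self, changes):
        self.state.update(changes)


def pending_changes(desired, reported):
    """Return only the settings where the device differs from the desired state."""
    return {key: value for key, value in desired.items()
            if reported.get(key) != value}


def sync(device, desired):
    """Send the device just the changes it needs and return what was sent."""
    changes = pending_changes(desired, device.state)
    if changes:
        device.apply(changes)
    return changes


lamp = Device("living-room-lamp", {"power": "off", "brightness": 40})
print(sync(lamp, {"power": "on", "brightness": 40}))
```

Sending only the difference keeps messages small, which matters for devices on slow or metered connections.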

At ElysiumPro, we guide students through IoT cloud integration projects, teaching them how to connect IoT devices to cloud platforms like AWS IoT Core or Microsoft Azure IoT Hub.

10. Cloud-Based Machine Learning Projects

Machine learning often requires large datasets and substantial processing power, which makes cloud computing a natural platform for building machine learning models. IEEE Cloud Machine Learning Projects focus on leveraging cloud platforms to build and train models for predictive analytics, data mining, and artificial intelligence.

One project idea is to build a cloud-based predictive model that can analyze large datasets and forecast future trends. This could involve using cloud machine learning services like Google Cloud Vertex AI, Amazon SageMaker, or Azure Machine Learning to build, train, and deploy the model.
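Before moving to a managed service, it helps to prototype the forecasting idea locally. The sketch below fits a straight line to evenly spaced observations with ordinary least squares and extrapolates it. It is a deliberately simple baseline in pure Python, not what any cloud ML service runs internally, and the function names are made up for this example.

```python
def fit_line(ys):
    """Least-squares fit of y = intercept + slope * x for x = 0, 1, 2, ..."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def forecast(ys, steps_ahead=1):
    """Extrapolate the fitted trend `steps_ahead` points past the last observation."""
    slope, intercept = fit_line(ys)
    return intercept + slope * (len(ys) - 1 + steps_ahead)


monthly_usage = [10, 12, 14, 16]  # hypothetical data with a steady upward trend
print(forecast(monthly_usage))
```

Once a baseline like this exists, the cloud version of the project has something to beat: the trained model should forecast better than the straight line on held-out data.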

At ElysiumPro, we assist students with cloud-based machine learning projects, helping them build powerful models that leverage cloud computing for fast and scalable analytics.

IEEE Cloud Computing Projects Table

| Project Idea | Key Skills Developed | Tools and Technologies Used | Outcome |
|---|---|---|---|
| Cloud Service Deployment | Cloud Platform Management | AWS, Google Cloud, Microsoft Azure | Efficient deployment of cloud services |
| Cloud Security | Data Encryption, Identity Management | OpenSSL, Cloud Security Tools | Secure cloud data storage and services |
| Cloud Application Development | Cloud Development, API Integration | AWS Lambda, Google Cloud Functions | Scalable cloud-based applications |
| Cloud Infrastructure | Resource Management, Auto-scaling | AWS EC2, Google Compute Engine | Build efficient cloud infrastructure |
| Cloud Automation | Cloud Resource Automation | Terraform, Ansible, Docker, Kubernetes | Automate cloud service provisioning |

Cloud Computing Project Checklist

- Understand the Cloud Platform: Familiarize yourself with the cloud platform you will be using, such as AWS, Google Cloud, or Azure.

- Choose the Right Tools: Use industry-standard tools like Terraform for automation or AWS Lambda for serverless computing.

- Ensure Scalability: Make sure your solution can handle increased workloads and scale efficiently.

- Test for Security: Implement strong security features such as encryption and access control for cloud services, and verify that they work as intended.

- Document Your Process: Keep track of your project’s progress and document your design, development, and testing phases.

FAQ

1. What is the best IEEE Cloud Computing project for beginners?

For beginners, cloud service deployment and cloud application development projects are great starting points. These projects introduce you to cloud platforms and basic cloud concepts such as scaling, resource management, and security. ElysiumPro provides excellent guidance for beginners looking to explore the world of cloud computing.

2. Can I work on cloud security as an IEEE project?

Yes, cloud security is one of the most important areas in cloud computing. Students can work on developing encryption tools, access control mechanisms, and secure cloud communication systems. ElysiumPro can assist you in developing robust cloud security projects to meet industry standards.

3. How do I select the right cloud platform for my project?

When selecting a platform, consider the features and tools each cloud service provides. AWS, Google Cloud, and Azure are the most commonly used platforms. ElysiumPro helps students choose the most appropriate platform for their cloud computing projects, ensuring they have access to the best tools and services.

4. What tools are essential for cloud-based machine learning projects?

For cloud-based machine learning projects, services such as Google Cloud Vertex AI, Amazon SageMaker, and Azure Machine Learning are widely used for building, training, and deploying machine learning models. ElysiumPro offers hands-on guidance for implementing these tools in your cloud machine learning projects.

5. How do cloud infrastructure projects help in real-world applications?

Cloud infrastructure projects help students understand how cloud resources are managed, optimized, and scaled. These projects teach students about building resilient systems capable of handling large-scale deployments. ElysiumPro guides students in creating efficient cloud infrastructure solutions that can be applied in real-world scenarios.
